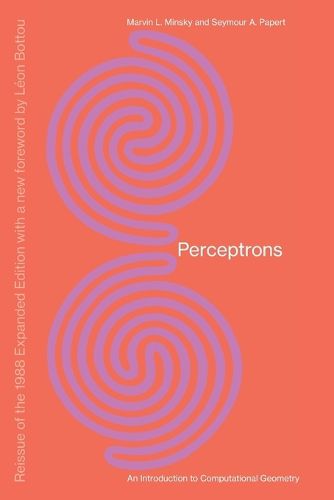

The first systematic study of parallelism in computation by two pioneers in the field.

Reissue of the 1988 Expanded Edition with a new foreword by Leon Bottou.

In 1969, ten years after the discovery of the perceptron, which showed that a machine could be taught to perform certain tasks using examples, Marvin Minsky and Seymour Papert published Perceptrons, their analysis of the computational capabilities of perceptrons for specific tasks. As Leon Bottou writes in his foreword to this edition, "Their rigorous work and brilliant technique does not make the perceptron look very good." Perhaps as a result, research turned away from the perceptron. Then the pendulum swung back, and machine learning became the fastest-growing field in computer science. Minsky and Papert’s insistence on its theoretical foundations is newly relevant.

Perceptrons, the first systematic study of parallelism in computation, marked a historic turn in artificial intelligence, returning to the idea that intelligence might emerge from the activity of networks of neuron-like entities. Minsky and Papert provided a mathematical analysis that showed the limitations of a class of computing machines that could be considered as models of the brain. In 1987 they added a new chapter in which they discuss the state of parallel computers and note a central theoretical challenge: reaching a deeper understanding of how objects or agents with individuality can emerge in a network. Progress in this area would link connectionism with what the authors have called "society theories of mind."